Clinical predictive models can help physicians and administrators forecast hospital readmission and other healthcare factors, influencing decisions about patient care. While existing computer models rely on structured data neatly organized in tables, the resources required to organize data from clinical notes have made it challenging to deploy models in real time.

Advances in artificial intelligence (AI) with large language models (LLMs) have the potential to remove this bottleneck, because an LLM can read and interpret physicians’ clinical notes directly.

In a study recently published in Nature, NYU Langone Health researchers used unstructured clinical notes to train NYUTron, a medical LLM, on 10 years of system-wide electronic health records (EHRs). The tool—currently in use in NYU Langone hospitals to predict the chances that a patient who is discharged will be readmitted within a month—is being trained for a wide range of other tasks.

The study marks the first successful real-time deployment of an LLM in a clinical setting.

“Our hypothesis was we can use large language models as the primary means of interfacing with the EHR. We can then take the wealth of unstructured data, use the language model to interact with it, and build other tools on top of it,” says Eric K. Oermann, MD, senior author on the study.

Real-Time Predictions

For the study, the researchers evaluated five predictive tasks: 30-day all-cause readmission, in-hospital mortality, comorbidity index, length of stay, and insurance denial. Across the five tasks, NYUTron had an area under the curve (AUC) of 78.7 to 94.9 percent, with an improvement of 5.36 to 14.7 percent in the AUC compared with traditional models.
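For readers less familiar with the metric, AUC can be read as the probability that a model scores a randomly chosen positive case (for example, a patient who was readmitted) above a randomly chosen negative one. The following is a minimal sketch of that definition in Python. The function name `roc_auc` and the toy labels and scores are invented for illustration and are not drawn from the study's data or code.

```python
def roc_auc(labels, scores):
    """AUC as the chance a random positive outscores a random negative.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data, made up for illustration only (1 = readmitted, 0 = not readmitted).
labels = [1, 0, 1, 0, 0, 1]
scores = [0.9, 0.2, 0.6, 0.7, 0.1, 0.8]

auc = roc_auc(labels, scores)
print(f"{auc:.3f}")  # prints 0.889 (8 of 9 positive/negative pairs ranked correctly)
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which gives some context for the 78.7 to 94.9 percent range reported above.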

“NYUTron has the potential to predict many care factors for which no predictive models currently exist. Or they do, but NYUTron works better, because the model is exposed to things that existing predictive models cannot access,” says Dr. Oermann.

LLMs for Medical Use

LLMs are most famous for their generative capacities in tools like ChatGPT. Beyond generative AI, they include a wide range of functionalities, including the ability to read and make meaning of data.

Instead of generating content, NYUTron reads, interprets, and utilizes existing notes written by physicians in the EHR to make predictions. This advancement allows researchers to build a comprehensive picture of a patient’s medical state to make more-accurate predictions that inform patient care.

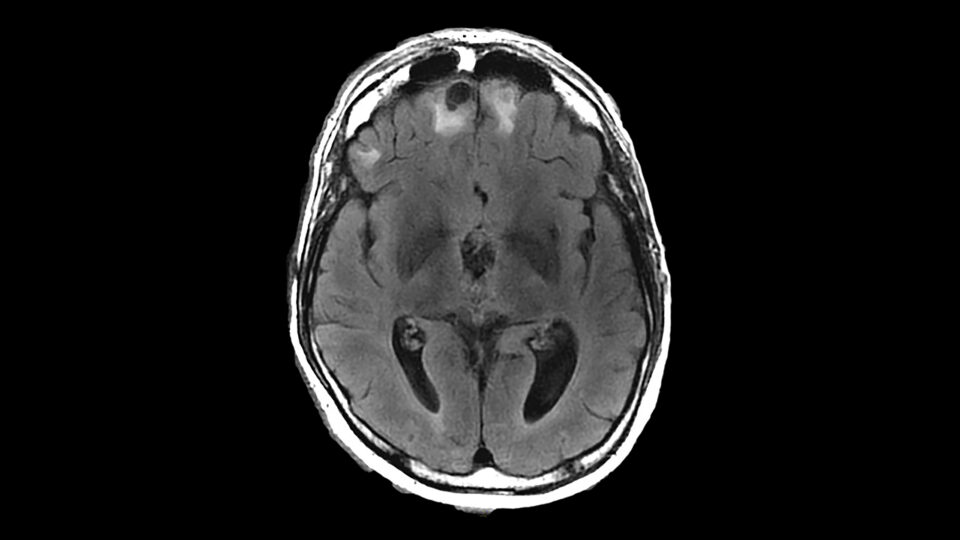

The study had four steps: data collection, pretraining, fine-tuning, and deployment. Data included 7.25 million clinical notes from over 387,000 patients across four hospitals. This resulted in a 4.1-billion-word dataset, NYU Notes, that captures data from January 2011 to May 2020.

Relative to many industry LLMs, this is a small dataset. The promising results from the study indicate the potential of research with smaller, more focused LLMs as opposed to generative models pretrained on large, nonspecific datasets.

30-Day Readmission

Of the five areas researchers examined, the 30-day readmission prediction was the one they highlighted, “mainly due to the historical significance,” says Dr. Oermann. “People have been trying to predict 30-day readmission since the 1980s.”

Because real-world patient outcomes are tied to 30-day readmissions, the metric is significant for patients, clinicians, and hospital administrators.
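To make the prediction target concrete, here is a minimal sketch of how a 30-day readmission label might be derived from a discharge date and the date of the patient's next admission. The function name, the inclusive 30-day boundary, and the treatment of a missing next admission are all assumptions made for illustration; the article does not spell out the study's exact labeling rules.

```python
from datetime import date
from typing import Optional


def readmitted_within_30_days(
    discharge: date,
    next_admission: Optional[date],
    window_days: int = 30,  # assumed inclusive window; the study's rule may differ
) -> bool:
    """Return True if the next admission falls within `window_days` of discharge."""
    if next_admission is None:
        # No later admission on record: treat as not readmitted (an assumption).
        return False
    delta = (next_admission - discharge).days
    return 0 <= delta <= window_days


# Hypothetical dates, not taken from any patient record.
print(readmitted_within_30_days(date(2020, 1, 1), date(2020, 1, 31)))  # True (30 days)
print(readmitted_within_30_days(date(2020, 1, 1), date(2020, 2, 1)))   # False (31 days)
```

A model such as NYUTron would then be trained to predict this kind of yes-or-no label from the notes available at discharge.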

Researchers deployed NYUTron in real time to assess how well it predicted 30-day readmission compared with six physicians. NYUTron outperformed both the physicians and other LLMs that were not pretrained on clinical notes.

Generalizability Across Hospitals

Developing and training AI models that retain performance across locations and medical populations has been a challenge with AI in healthcare.

“With our experiments, as expected, there was a performance drop between Tisch Hospital in Manhattan and NYU Langone Hospital—Brooklyn, because the patient populations are different,” Dr. Oermann says. “We salvaged the performance drop by fine-tuning the model locally.”

Beyond the five tasks evaluated in the study, the research team is working with the Predictive Analytics Unit at NYU Langone on around a hundred potential new tasks for the LLM. Additionally, the researchers are developing a consortium to share these techniques with other academic medical centers.